No one denies the exceptional fluency of Large Language Models (LLMs). They generate human-like text in seconds. However, like every other tech, they also have severe limitations. Most of the time, the output we receive contains incorrect facts or omits new data. Such an obvious alteration in facts means you cannot fully trust their output in business applications.

Retrieval Augmented Generation (RAG) fixes that problem. The framework connects the LLM to an external and up-to-date knowledge base. This way, the model looks up precise information before generating an answer that is not just trustworthy but also citable.

Our blog will walk you through the entire Retrieval Augmented Generation mechanism. We will detail different RAG architectures, use cases, and limitations. You will also see the top RAG tools that companies use to make AI more factual and relevant.

What is Retrieval Augmented Generation (RAG)?

Retrieval-Augmented Generation is a method that combines information retrieval with text generation to improve the accuracy and reliability of large language models (LLMs).

Instead of relying only on what a model learned during training, it links the LLM to an external, up-to-date knowledge retrieval pipeline. When a user asks a question, the system first retrieves relevant documents from a custom knowledge base to generate the final answer.

The idea first appeared in 2020 when researchers at Meta AI introduced the concept in a paper on knowledge-intensive natural language tasks. The goal was to create grounded AI systems that reduce hallucination and explain answers with evidence.

This two-step approach radically changes how LLMs operate. It shifts them from pure knowledge recall systems to intelligent information agents grounded in real data.

AI Hallucinations destroy user trust.

RevAI creates Retrieval Augmented Generation systems for grounded and factual output.

Book Your Call NowWhat Are the Different Types of Retrieval Augmented Generation?

RAG is not a single design. Different generative AI architectures support various needs depending on data type, scale, and accuracy goals.

Naive RAG: The Foundational Architecture

Naive RAG represents the basic pipeline: Indexing, Retrieval, Augmentation, and Generation. It is the easiest form of RAG to implement.

The strength of Naive RAG lies in its simplicity and effectiveness for small, clean datasets. Its limitation is that it does not account for complex user queries or noise in the document chunks.

Modular and Agentic RAG

Modular RAG introduces optional steps and logic gates into the pipeline. It allows the system to decide whether to use semantic search, metadata filters, or even a different LLM for rephrasing the user query.

Agentic RAG takes this flexibility further by adding reasoning loops where the model can plan multiple retrieval steps. It acts more like an AI agent that runs separate retrieval steps for each sub-question before combining the results. This improves the depth of the overall answer.

Graph RAG

Graph-based RAG connects related documents using knowledge graphs. Instead of treating information as flat text, it maps how topics and entities relate. This structure helps the retriever understand context, reducing irrelevant or partial results. Graph RAG is highly effective in domains like finance or life sciences, where relationships between data points are critical.

Advanced RAG Architectures

Optimizing the RAG pipeline for production readiness and consistency is often referred to as RAG Ops. These advanced techniques required custom NLP to overcome the inherent limitations of simple vector search.

Pre-Retrieval Optimization Techniques

Pre-retrieval techniques focus on improving the quality of the index and the incoming query before the search happens. Techniques like Document Hierarchy create parent-child relationships between document chunks to retrieve a precise child chunk, but use the comprehensive parent chunk for context injection.

Post-Retrieval Optimization Techniques

Post-retrieval methods refine the search results after they come back from the vector database. The most common technique is using a re-ranking model. It takes the top 50 retrieved chunks and re-sorts them.

Then, the model places the most relevant chunks at the very top of the list for the LLM to mitigate the missed top documents issue.

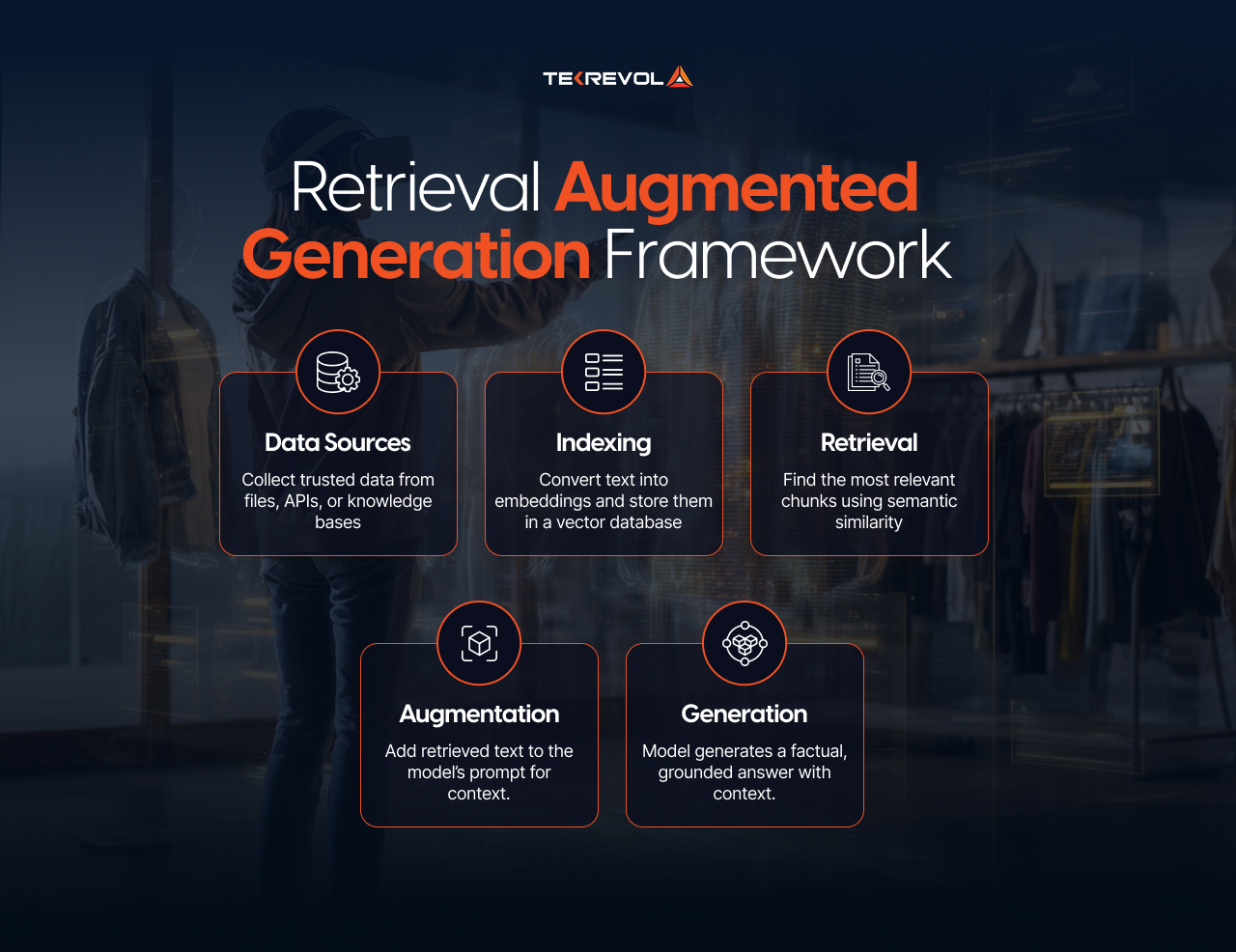

How Does Retrieval Augmented Generation Work?

The retrieval augmented generation workflow is a four-stage process. It starts when a user sends a query and ends when the model generates an answer that includes facts from external data.

1. Indexing (The Preparation Phase)

Indexing is the process of preparing your data and making it searchable. It involves loading documents. Then it splits them into manageable chunks, a process called document chunking.

Next, the system uses an embedding model to convert these text chunks into numerical vector embeddings, which are stored in a specialized vector database.

Indexing is critical because the quality of the documents and their vector representations directly determines the accuracy of the retrieval step later on.

2. Retrieval (The Search Phase)

Retrieval is the mechanism that converts the user’s question into a vector and uses it to search the indexed vector database.

The system uses the same embedding model to vectorize the user’s query. It then performs a vector similarity search against all the stored document vectors to find the top-most semantically relevant text chunks, even if they do not share exact keywords.

This step identifies the most relevant context from your external knowledge base that can answer the user’s request. The retrieved text is not the answer itself, but the raw material for the next stage.

3. Augmentation (The Context Phase)

Augmentation, also known as prompt construction, involves creating a new prompt for the Large Language Model.

The system combines the original user query with the retrieved text chunks and a specific system prompt or instruction. The instruction usually tells the LLM to “Act as an expert” and “Use only the provided context to answer the question.”

This augmented prompt gives the LLM everything it needs to generate an accurate response confined to the provided facts. ML developers often use LangChain or LlamaIndex to orchestrate this step.

4. Generation (The Synthesis Phase)

Generation is where the LLM synthesizes the final answer using the provided context. The LLM receives the augmented prompt and uses its reasoning abilities to generate a response.

Since it includes real-time data, the output is more accurate and transparent. Many RAG systems also display citations or source snippets to build user trust.

This complete four-step cycle is the foundation of building successful retrieval augmented generation applications.

What are the Benefits of Retrieval Augmented Generation?

The architectural shift to RAG delivers numerous practical and business-critical AI advantages over relying on ungrounded LLMs.

Hallucination Reduction

It offers factual grounding by retrieving information from reliable sources before responding. It cuts down on hallucinations and makes AI answers explainable with citations.

Access to Live and Proprietary Data

LLMs are limited by their Training Cutoff date. RAG improves it by giving access to live or proprietary data. Enterprises can connect RAG to internal documents or knowledge bases to serve employees or customers.

Lower Training Costs

Unlike fine-tuning, RAG does not require massive re-training of the entire LLM. New data only requires re-indexing the vector database, which is fast and inexpensive.

Cost-Effective Customization

RAG is also cost-effective. Instead of retraining a model every time data changes, you can update the retriever index. This approach lowers infrastructure costs while keeping information current.

Explainability and Citations

Finally, RAG builds trust through transparency and controllability. Businesses decide which sources feed their AI, ensuring responses align with their standards and compliance rules.

How Does Retrieval Augmented Generation Compare to Other AI Techniques?

The choice between retrieval augmented generation and other AI methods like Fine-Tuning or simple prompt engineering is a strategic one. Both enhance the LLM, but they serve different intents.

Comparison of LLM Customization Methods

| Feature | Retrieval Augmented Generation (RAG) | Fine-Tuning (FT) | Traditional LLM |

| Data Updating | Dynamic (Update index instantly) | Requires retraining (Slow/Expensive) | Static (Frozen at training) |

| Knowledge Type | New, external facts | Internalized style and specific skills | General world knowledge |

| Cost | Low

(Indexing cost only) |

High

(Training GPU hours) |

Moderate

(Inference cost only) |

| Accuracy Boost | High (if retrieval is relevant) | Medium–High (Improved domain reasoning) | Varies (High for general tasks) |

| Ideal For | Knowledge retrieval | Domain specialization | General tasks |

How RAG Complements Fine-Tuning?

RAG and Fine-Tuning are not mutually exclusive, but they work best in hybrid LLM architecture systems. Fine-tuning adapts a model’s tone or style, while RAG ensures factual grounding. Many organizations fine-tune their LLM for brand language but use RAG to connect it with internal knowledge bases.

How RAG Differs from Plug-ins and APIs?

Plug-ins and APIs development call specific services to perform actions. RAG, on the other hand, retrieves and reasons over content. It doesn’t just fetch data but understands and integrates it into responses.

How to Build a RAG System? (Step-by-Step Overview)

To implement retrieval augmented generation, follow the given six-step process. This structure helps ensure your knowledge retrieval pipeline is robust and scalable.

- Choose a data source and structure: Select where your knowledge will come from. It can be company files, product FAQs, or public databases.

- Create vector embeddings: Use an embedding model like OpenAI Embeddings or Sentence Transformers to convert text into vectors.

- Configure retriever logic: Store vectors in a database such as Pinecone or SingleStoreDB. Set parameters for similarity scoring and top-K retrieval.

- Augment the prompt with retrieved data: Use AI frameworks like LangChain or LlamaIndex to combine retrieved text with the user’s query.

- Generate the grounded output: Pass the combined prompt to an LLM for generation. The model now writes with real context.

- Evaluate and refine: Test how accurate and relevant responses are. Adjust chunking, embedding, or ranking logic to improve results.

Turn RAG blueprints into working systems.

RevAI helps you build Retrieval Augmented Generation frameworks that scale with your data.

Get Your Roadmap TodayRAG Tech Stack

Building a production-grade RAG system requires integrating specialized tools for each pipeline stage. The most common stack includes:

- LangChain for orchestration

- LlamaIndex for indexing and document management

- Pinecone or Weaviate for vector storage

- AWS Kendra or Bedrock for retrieval at scale

- Google Vertex AI Search for semantic ranking

- NVIDIA NeMo Guardrails for response moderation

- SingleStoreDB for real-time vector operations

Each tool has unique strengths, and many AI development companies mix several to fit their architecture.

What are the Applications of Retrieval Augmented Generation?

The ability of RAG to blend the fluency of LLMs with real-time, factual data unlocks numerous generative AI use cases across nearly every industry.

- Enterprise search assistants use RAG to answer employee queries from internal documents or knowledge bases. It cuts search time and keeps answers accurate.

- Healthcare knowledge retrieval systems combine patient data with medical literature, helping clinicians find evidence-based answers.

- Financial and legal document analysis tools rely on RAG to navigate large collections of reports, contracts, and policies. They summarize findings and reference the sources for compliance.

- Educational software systems use RAG to personalize learning materials by connecting lessons with recent research or datasets.

- Customer service chatbots powered by RAG provide precise answers from product manuals, FAQs, and support logs.

- Developer tools and code assistants link to documentation and repositories to generate grounded responses about frameworks or APIs.

These applications show that RAG is more than a research concept. It’s already shaping real business workflows across industries such as finance, healthcare, education, and technology.

What are the Limitations of Retrieval Augmented Generation?

Every technology has constraints, and RAG is no exception. Understanding its limitations helps design better systems.

1. Retrieval Quality Dependence

The biggest vulnerability in any RAG system is the Garbage In, Garbage Out principle. The quality of the final response depends on the quality of the retrieved documents. If the retrieval component fails to find the most relevant information, the LLM will provide an incomplete answer.

2. Latency and Performance Overhead

Because RAG is a multi-step process, it introduces inherent latency compared to a single LLM call. When not optimized, it can slow down responses, especially with large datasets or complex queries.

3. Context Limitations

Even with better retrieval, large language models still have context window limits. Only a certain amount of text fits into each prompt, which can restrict how much information the model can process at once. This forces the system to truncate information.

4. Data Privacy and Compliance

Implementing RAG often involves indexing vast amounts of sensitive or proprietary data, which introduces serious data privacy and compliance challenges. Organizations must manage access controls at the document chunk level and follow data protection policies.

5. Evaluation and Grounding Difficulty

Measuring the true accuracy of a RAG system is complex. Traditional metrics only measure linguistic quality. This difficulty in evaluation makes refining and optimizing the RAG pipeline a complex engineering task.

What is the Future of Retrieval Augmented Generation?

The next few years will bring major improvements to RAG systems. One major trend is the Integration with Autonomous AI Agents. Future systems will be self-directed, deciding which knowledge source to query, how to reformulate the question, and when to ask for clarification.

Multimodal AI Retrieval is also becoming standard. Instead of just text, RAG will retrieve context from images, videos, and code simultaneously, leading to richer context engineering.

Further developments include on-device retrieval or edge RAG to help privacy-sensitive industries use local data without sending it to cloud models. Personalized RAG will adapt retrieval and generation based on user preferences, making outputs more relevant.

Regulators also focus on explainable AI, requiring transparency in how data is sourced and used. Gartner predicts that by 2026, 80% of enterprise AI systems will integrate RAG workflows. This highlights the architectural shift toward reliable AI systems.

Conclusion

Retrieval Augmented Generation (RAG) marks a turning point in how AI learns and communicates. Instead of relying on static memory, it connects to live information. That change helps build grounded and reliable AI.

Enterprises and researchers already use RAG-based solutions to make applications smarter and more transparent. With stronger tools and architectures emerging, its role as a hybrid LLM architecture will only grow in the coming years.

If you want to explore RAG within your digital ecosystem, RevAI is at your disposal. Our Gen AI augmented services can help you plan and deploy solutions that use retrieval-augmented generation to add real value.

Don't just read about RAG.

Partner with RevAI to design your verifiable AI systems today.

Claim your free consultation.